This story begins on Tuesday, February 12, 2019 – at the time, I was taking six courses, going for driving classes, writing a math book (that project’s dead now), and hanging out with friends (Hi Maryam!). So of course when I received an email about a competition, I decided to participate.

This wasn’t the only contest I was preparing for: Dr. Qadah was telling me they needed to send a team to Oman for 4 days, and that since I was the only one who had been attending the training last semester, I was one of their best shots. I was flattered, so I brushed up on my data structures & algorithms skills and registered for the local round.

Since I’m a member of every club at AUS, my inbox gets cluttered with announcements for every event that happens on campus. I like to know everything that’s going on and this is one way of keeping an information network alive. I joke that I’m only interested in the catering – it’s actually possible to set up a mail filter that detects “free food” using supervised machine learning methods like Naive Bayes classification, but I’ve been too lazy to implement one so far. So if it had just been an email, I may have missed it.

Thankfully, there was a person-sized poster that had been put up in the engineering building.

I scanned the QR Code, which lead me to a website that hosted a presentation.

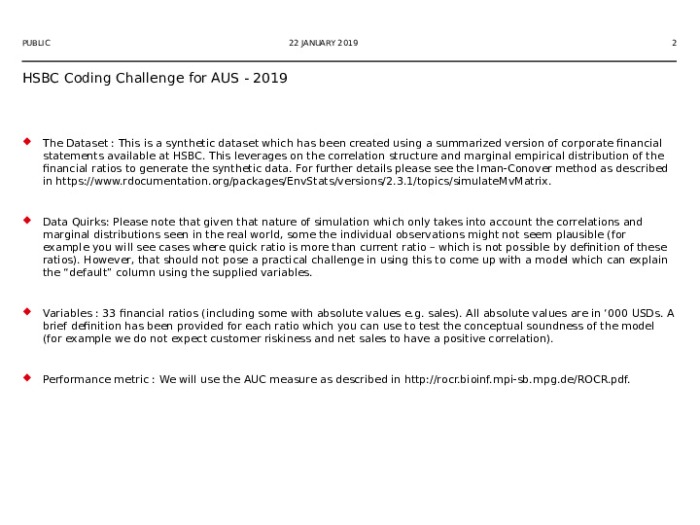

Reading about the problem made me sure that this was the sort of thing I was interested in, so I sent an email with my name, ID, and major. Two days later, they sent me the material:

I’ve blocked out the emails of the other participants to give them their privacy, but I could easily click “show details” to check the list and find out how many competitors there were (about 30). There were alumni and graduate students in the list, so I thought I’d be happy to make it to top 10.

I was a little surprised that they were able to send the file over email, because if you can do that then your dataset is trivially small. It was a tiny 5.2 Mb excel file – for comparison, the dataset for the OMG data science challenge was 7.5 Gb. 10,000 observations sounds like a big number, but in deep learning dealing with billions of records is not unusual. Usually we use a distributed file system like Apache Hadoop to deal with data on that scale, which is overkill for anything that you can fit in memory.

I’m not trying to say that small problems aren’t interesting. But they’re definitely much less resource intensive. If you want to do “grown up” machine learning, at some point you’re going to need some beefy hardware. For short bursts, it’s most cost-effective to rent compute on someone else’s server using cloud services like PaperSpace, AWS, or FloydHub. For more sustained use, investing in a GPU makes senses if money isn’t a big deal. Of course, if you’re a big company, then it’s economical to buy whole farms of GPUs, but no one makes decisions like that based on advice they read on some teenager’s blog.

I didn’t have to worry about any of that. I looked up “Probability of Default estimation” on wikipedia, and the page had links to eight or so different methods. I also checked out research papers to look for previous work and recent breakthroughs. Yeh, IC & Lien, C (2009) compared six methods and found that artificial neural networks had the best performance (with respect to a metric called the “area curve”). However, my dataset was smaller (9968 vs 25,000 observations), more unbalanced (3% vs 22.1% default), and higher dimensional (33 vs 23 variables). In fact, where my features (columns) represented different financial ratios for certain companies, their features represented categorical data (gender, education, history of past payments) and non categorical data (bill amounts) for different credit card holders. Therefore, I had to be careful as these differences meant that our results could differ significantly.

I continued to do research. I looked at book chapters, comment threads, software documentation, lecture notes, blog posts, and even quora (the horror!). This was a ton of fun. Actually, I could have gotten away with skimming through the more mathematical content, but this is what I enjoy, so I tried to understand it. It is important to have a good grasp of the uses and limitations of our tools.

I never ran into problems that some of my peers were struggling with simply because of how careful I was at the earlier stages. I did some basic analysis of the dataset using tools built into Excel, which revealed characteristics that I had to take into account. For instance, there were some records with missing entries, so I knew my solution had to be able to deal with those. Of course, this is the bare minimum when it comes to inspecting data, but you’d be surprised at how often people forget to pay attention to these things. Little mistakes make big problems.

Before sitting down to write the program, I sketched out what I was going to do. This was different from what I ended up doing, because whenever I ran into trouble and got stuck I pivoted to trying something else. However, the planning was still necessary as it helped me get an idea of the structure of the solution. First I needed to get the data into the program, then I needed to run some computations on it, then I needed to write that data back out in the format they wanted. This is super general – it doesn’t constrain the specific approach you take, but any successful procedure has to satisfy these criteria.

For getting the data into the program, I wanted to save the excel file as a .csv (comma separated variable) file and then work with the .csv file. Making the .csv file was no problem – I literally pressed “Save As” and changed the file type in the drop down menu – but I was having trouble doing computations with it. I don’t remember what the problem was, exactly: I’d imported the csv module, opened the file, made sure to explicitly cast the numbers to floats, and read it into an object (don’t do this if you have a huge file lol, but on my system a python list can theoretically hold up to 9,223,372,036,854,775,807 elements [practically speaking it would induce thrashing and make operations too slow to be useful, better to use something more efficient like numpy, and do binning/clustering, and run-length encoding, and split it into subsets, and do out-of-core execution, or use one of ANY NUMBER OF TECHNIQUES ]).

Anyway, using .csv files was not working for illegible reasons. Guess I’ll die ¯\_(ツ)_/¯.

I had to find another way to get the data in. I half-remembered some tutorials I’d done ages ago on Automate the Boring Stuff with Python which involved working with Excel spreadsheets, so I loaded those up and messed about with the example code till it did what I wanted it to. This was fairly straightforward. I ran into a snag with the OpenPyXL module when it turned out one of the functions had been deprecated (removed in the current version, with a helpful error message), but digging into the docs helped me find a replacement easily.

Keep in mind that at this point I’d written about 13 lines of code.

Programming is hard, okay?

I’ve noticed that I never explicitly mentioned that I was using python. I took it as a given, because what else would I use? R? If I was using R, I would definitely mention it, if only to show off that I knew R (I don’t), but python is straight up mundane. I opened Anaconda Navigator, launched Spyder, and just wrote small scripts and tested them in the console. Nothing special. Not even SQL.

Next I had to process the data. The easiest and simplest way to do this was to use scikit-learn. If I was cool I would have used tensorflow. Sadly, I am not cool. I used scikit-learn and enjoyed it.

I made it a mini competition, throwing different models at the data and looking at how they performed. Scikit-learn has a function called roc_auc_score. Being given the privilege to be this lazy gave me a feeling that was comparable to drinking half a glass of cold orange juice. Sublime.

The winner was a technique called logistic regression. Reading more about it, I could see how it was perfectly suited to the problem at hand. I wrote the rest of the code to use the model to generate credit scores and write them back to the Excel file, and so I was ready to submit ahead of schedule.

All the programming was done on February 19th.

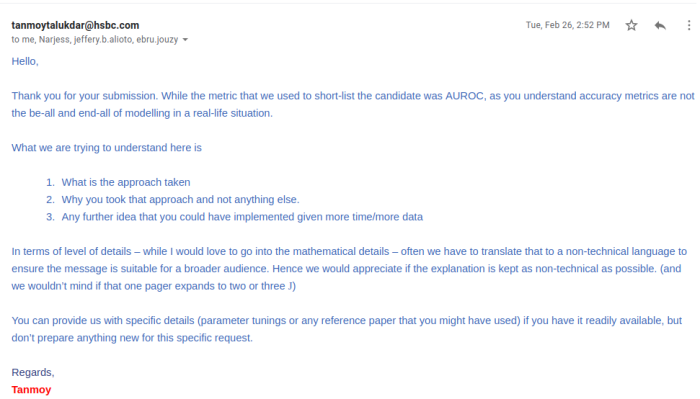

A week later, on February 26th, I got an email saying I’d made it to the top ten.

I was ecstatic. I started singing and dancing when I came home, “I got shortlisted, I got shortlisted!”. This was pure joy. I got less happiness from winning than I did from knowing that I was good enough to make something that worked without bursting into flames. That I wasn’t a complete incompetent failure who could never utilize his “knowledge” to solve problems. That there was a piece of evidence against the claims made by the belittling, vicious, cruel voices in my head. That for one moment, all my insecurities were wrong and I had actually done well.

Now I had a model and some predictions. Was I done? No. Now I went to Zain.

Zain is my best friend. He’s a double major in finance and accounting, and he’s a genius at both of them. I want to go into a long tangent about his accomplishments, but then he would end up stealing the show, so take it at face value when I say he’s ridiculously skilled.

I arranged to have dinner with Zain. I brought my laptop with me. I explained what I’d done, and showed him what my model “thought” were the important financial ratios (and whether they were “good” ratios or “bad” ratios). Of course, models don’t have thoughts, they have coefficients you can understand using the likelihood ratio test, and “good and bad” is a shorthand way of referring to ratios that are positively and negatively correlated with the probability of default. He looked over it, scrolling, asked me a few questions, stared at it, and then nodded his head.

Zain is fast.

I had to protest. Come on. That wasn’t nearly enough time to decide either way! Did you check all the ratios? There are 33 of them! And they have formulas! How can you know?

And then he started talking.

When Zain talks finance and accounting stuff to me, I try my best to listen and understand, because that’s what I would want someone to do when I talk CS and Math to them. As hard as I try, I inevitably zone out, and his speech becomes an incomprehensible string of “liability quick ratio loan asset amortization cost equity profit net working capital turnover debt”. He was explaining why he thought everything was right, but for all I know he could have been making it up. Sometimes I check online after one of our conversations to see if this is a long term prank he’s pulling, but if it is, then he’s been dastardly enough to change the webpages so they say what he’s saying too.

Another thing we discussed during dinner was how to write the report. I’d sent an email earlier asking for clarification immediately after getting the message, and gotten a reply really quickly.

After dinner, Zain forced me to drink a can of coffee, which meant I couldn’t sleep that night and I ended up finishing the writeup as well (available here). I spent hours agonizing over word choice, formatting, organization, phrasing: I would go to bed only to get up again because I had something I needed to write. I had a draft, so I wasn’t working from nothing, but it was still a hyperfocus frenzy.

I didn’t come to university the next day. I slept. That was Wednesday.

Saturday was the gulf programming competition (remember the second paragraph in this essay? The reason I was doing these things so fast was because I had a dozen other things going on at the same time). When I woke up, I was just too exhausted and overwhelmed to go, so I decided not to. The hardest thing I do every day is waking up and getting out of bed. Some days I’m too weak to do that. I felt terrible, like I was letting Dr. Qadah down, that I was wasting all the time I’d invested into training, that I was failing to live up to my potential. That I wasn’t doing everything I could. But pretty soon I’d be forgetting all of that.

The next day I got an email saying I’d won first place, and that I was invited to HSBC Tower to meet with some officials and go through a certification ceremony. It took some time for us to finalize the date, but it was worth the wait: HSBC Tower is a cool building, and the view from the floor we went to was as breathtaking as Keanu Reeves. I really enjoyed talking to the people there, and proving my skills to the other two winners (master’s students Mohammed and Motamen, who also worked as my TAs for the computer systems lab) meant I had the connections to form a team for the OMG MENA Inter-University Data Science Competition, where we ended up placing first in the national round and third in the international round. Which was in turn a stepping stone to even greater things.

Final reflections:

If you would ask me to rank my machine learning engineering skills on a scale where 0 is complete novice, then I would put myself at around a 3.

10 is a Goodfellow or Karpathy or Schmidhuber, 9 is a FAANG tier researcher, 8 would be an experienced professional who can deploy and maintain high performance internet scale models in production, 7 would be someone who has a PhD or equivalent experience, 6 would be someone who has won a Kaggle competition, 5 would be someone who can compete with a Master’s graduate from a reputable university, 4 would be someone who can do some of the many, many things I haven’t gotten around to. 3 is someone who can use a bunch of libraries and do some feature engineering and analysis with only a superficial understanding of what’s going on under the hood. 2 has some introductory math/programming experience, if not necessarily with machine learning. 1 has read about the subject, but doesn’t have a clue about what the technical stuff involves. I fully expect these ratings to change as I learn more, but this is a quick and dirty approximation.

I have a long road ahead of me. I’m just getting started on my journey and I don’t know where I’ll end up, but at least I know I like walking. Hopefully I can get to a 4 by this time next year, 5 in two years, 6 in five, and 7-11 at some point in my lifetime.

When the official announcement went out, I had a few people message me congratulations, which felt great. Dr. Hicham also got everyone to give me a round of applause at the end of systems, which made my brain break for a moment. It felt great to know that everyone was happy for me and wanted me to succeed. I hope I am able to help them achieve their success as well!